The 2026 AI Index Report is the kind of document that nobody reads all the way through, which is probably by design. It's published by Stanford's Institute for Human-Centered AI, it's free, and it's dense enough that most coverage of it ends up cherry-picking a few numbers and calling it a day. I read the parts that matter. Here's what I found.

AI Is Getting Better Fast. Embarrassingly Fast.

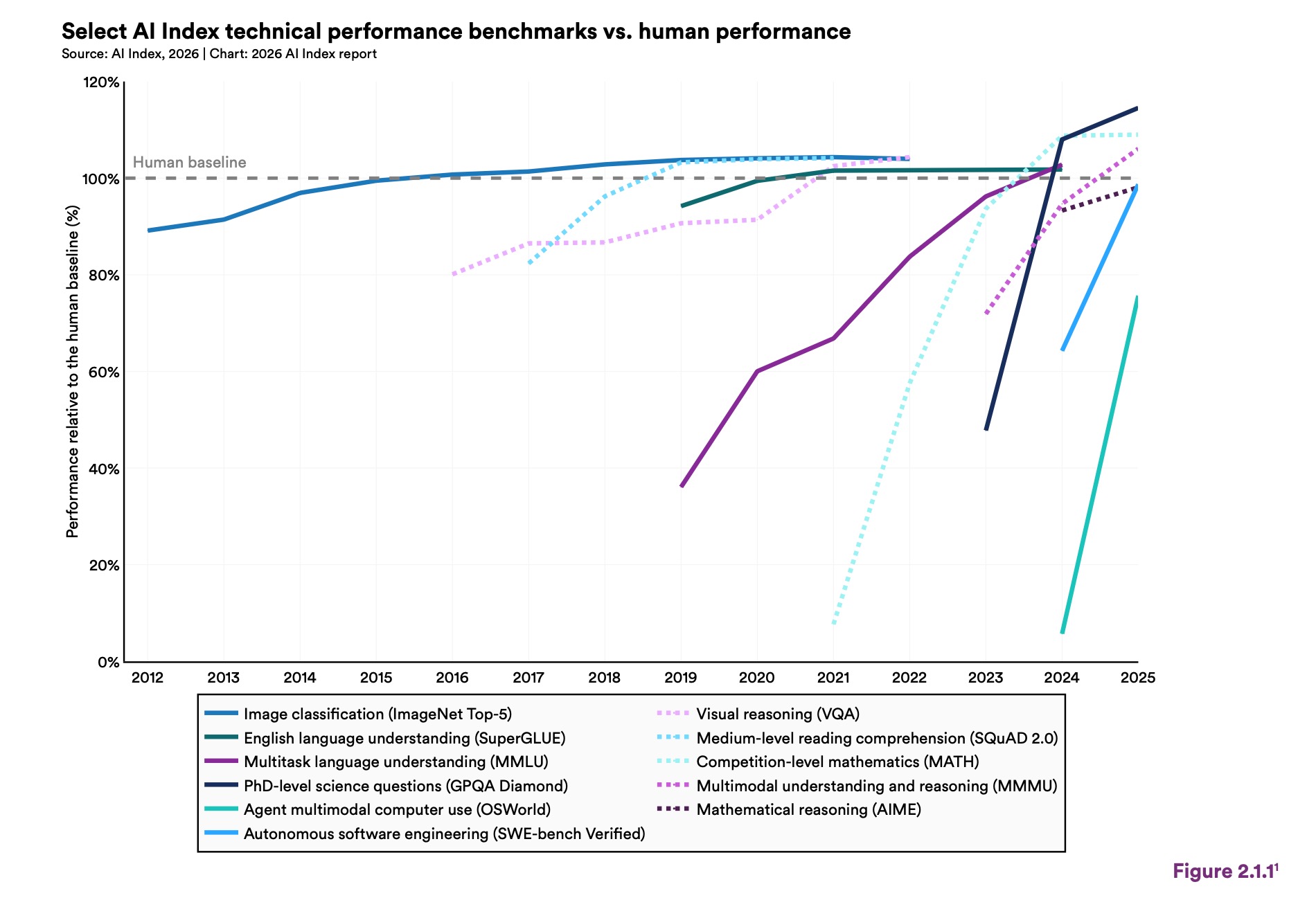

On a benchmark called SWE-bench Verified, which tests whether AI can solve real software engineering problems pulled from actual GitHub issues, performance went from 60% to nearly 100% of the human baseline in a single year. One year. The report also notes that several frontier models now meet or exceed human performance on PhD-level science questions and competition mathematics.

That's not hype. That's a chart.

But here's the part buried right next to it, and the part I find genuinely fascinating: the same models that can win a gold medal at the International Mathematical Olympiad read analog clocks correctly only about 50% of the time. Roughly a coin flip. Researchers have a name for this. They call it the jagged frontier, and once you hear the term, you can't stop seeing it everywhere.

AI is not a rising tide that lifts all boats equally. It's a mountain range with sharp peaks and deep valleys sitting right next to each other. It can solve a problem your PhD advisor couldn't, then fail at something a second-grader handles without thinking about it.

After 30 years of building things on the web, I find this oddly comforting. Not because the gaps aren't real, but because it means we're still in the part of the story where understanding the tool matters. Knowing where the edges are is a skill. It always has been.

The Part Nobody Wants to Talk About

I'm going to say the quiet part out loud.

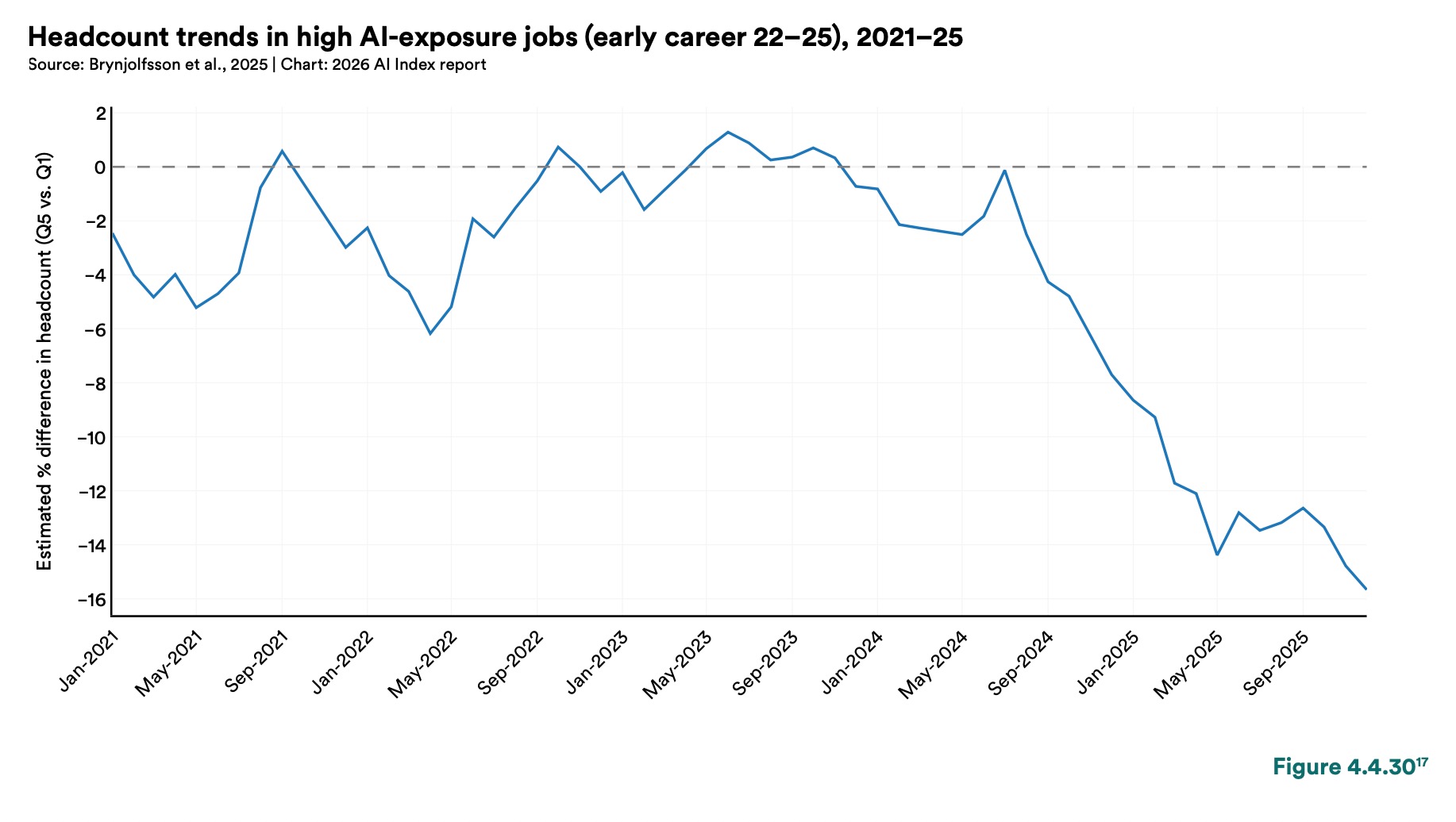

U.S. software developers between the ages of 22 and 25 saw employment fall nearly 20% in a single year. Meanwhile, employment for older developers kept growing.

The report frames this carefully, noting that productivity gains from AI are clearest in software development, where studies show improvements of 14% to 26%. It does not say those two facts are related. It doesn't have to.

I've been doing this long enough to remember when everyone said outsourcing would hollow out entry-level dev jobs. It did, partially and for a while, and then the industry adapted. I've seen the same pattern play out with every wave of tooling. But this one feels different in speed and scope, and pretending otherwise does a disservice to the people just starting out.

The honest advice, if you're early in your career: learn the tools, yes, but also go deep on the judgment work. Architecture decisions. Stakeholder communication. The stuff that requires context you can only build over time. The report itself notes that AI's productivity gains are "weaker or negative" in tasks requiring more judgment. That's not a loophole. That's a direction.

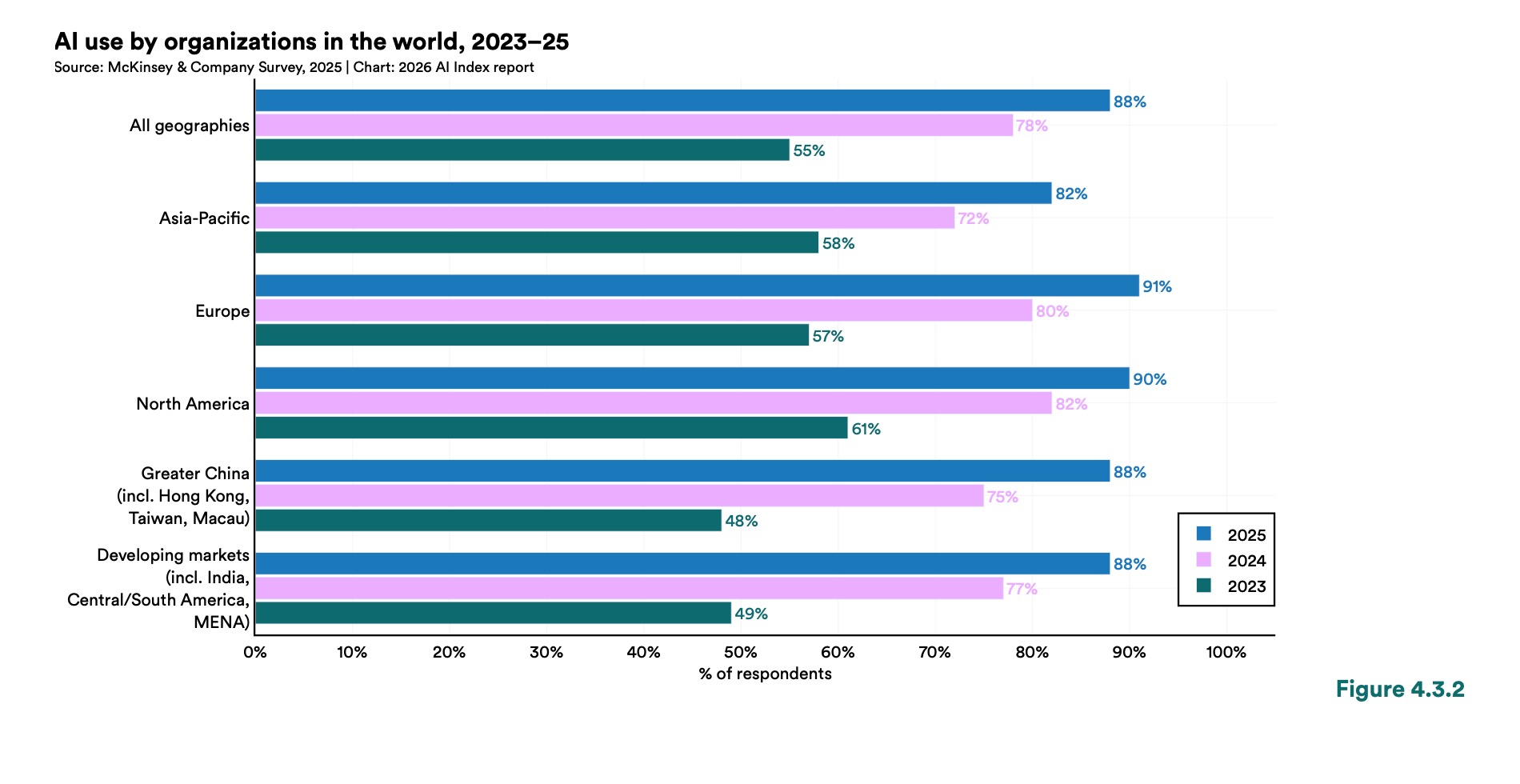

The Adoption Numbers Are Wild

Generative AI hit 53% population adoption in three years. For comparison, the PC took longer to get there. So did the internet.

The U.S. ranks 24th globally in consumer adoption at 28.3%, which surprised me. Singapore is at 61%. The UAE is at 54%. There's a strong correlation with GDP per capita, but some countries punch well above their weight, which suggests adoption is at least partly cultural and policy-driven, not just a function of wealth and infrastructure.

88% of organizations have adopted AI in some form. 4 in 5 college students now use generative AI. And the value U.S. consumers get from generative AI tools is climbing fast: the median value tripled between 2025 and 2026, and the total reached an estimated $172 billion annually, for tools most people are using for free.

That last number is worth sitting with. Whatever you think about the AI industry's sustainability or its various problems, a lot of people are getting a lot of value out of this right now.

The Part That Should Worry Everyone

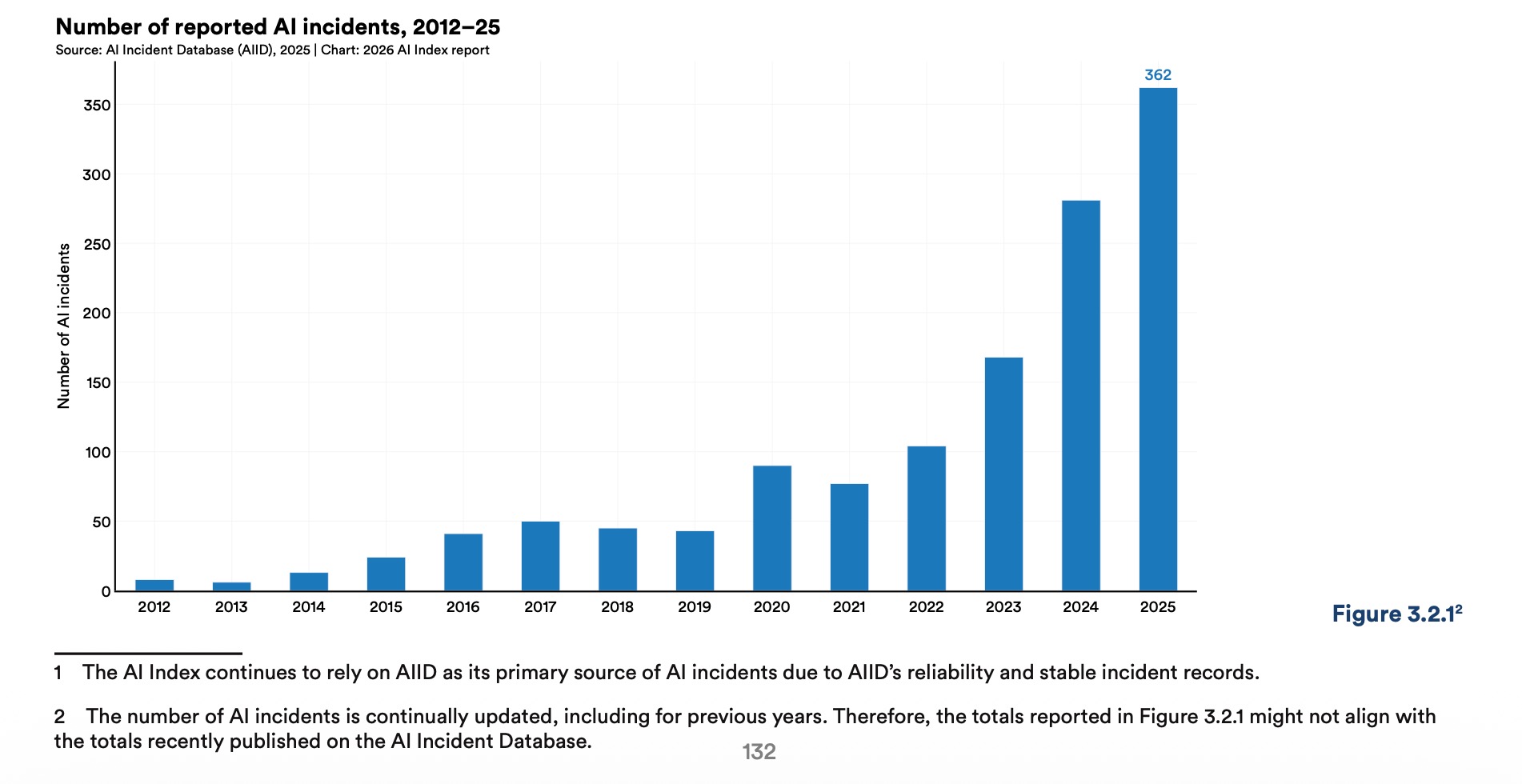

The report is measured in its language, but one section cuts through: responsible AI is not keeping pace with capability.

Documented AI incidents rose to 362 in the past year, up from 233 the year before. Almost every frontier model developer reports on capability benchmarks, but reporting on safety and responsibility benchmarks is, in the report's word, "spotty."

There's also a genuinely frustrating research finding buried in there: improving one responsible AI dimension, like safety, can actively degrade another, like accuracy. These aren't separate dials you can turn independently.

I don't think this means AI is doomed or dangerous in some sci-fi sense. I think it means we're building very fast and measuring very selectively, and that's a pattern the web industry has seen before. Usually not with great outcomes.

The web in the early 2000s moved fast because the economic incentive to ship outweighed the cost of getting it wrong. For most of that period, getting it wrong meant a broken form submission or a layout that fell apart in IE5. The stakes of getting AI wrong are larger by a meaningful margin, and the feedback loop between deployment and consequence is much harder to observe.

One More Thing

The gap between what AI experts believe and what the general public believes is enormous. 73% of experts expect AI to have a positive impact on how people do their jobs. 23% of the public agrees. That's a 50-point gap, and it's not closing.

I think both groups are partially right. The tools are genuinely remarkable and the disruption is genuinely real, and neither of those things cancels the other out. The experts see the capability curve and extrapolate forward. The public sees the job market and the incident count and draws its own conclusions. Both sets of data are real.

What I keep coming back to, after 30 years of watching technology waves come in, is that the people who do best in these transitions are the ones who spend less time arguing about whether the wave is coming and more time figuring out how to surf it. The wave is already here. The question is just what you do next.

The 2026 AI Index Report is published by the Stanford Institute for Human-Centered Artificial Intelligence. It's free and publicly available at hai.stanford.edu/ai-index if you want to go deeper on any of this.


UI developer and federal design systems engineer in Florida. I build accessible interfaces for federal health infrastructure and the open web. More at peterbenoit.com.